A few years ago, the idea of a “threat to the privacy of thought” seemed like dystopian science fiction, like George Orwell’s novel 1984.

But for Nita Farahany, a professor at Duke University (USA) who specializes in researching the consequences of new technologies and their ethical implications, this threat already exists and must be taken seriously.

This year, the Iranian-American professor published the book The Battle for Your Brain: Defending the Right to Think Freely in the Age of Neurotechnology.

But how is it possible to read our brain? Well, there is still no super machine that enters a person’s head and provides a complete list of ideas and concepts, as there is in science fiction.

But in fact, Farahany explains that the defenses of our privacy of thought have begun to be torn down without the need to directly examine the brain.

The data era

This is possible thanks to the large amount of personal data that we share on social networks and other applications, which is then analyzed by algorithms and monetized.

Today, technology companies have important information about us: who our friends are, what content stirs our emotions (and, more importantly, which emotions), our political preferences, what products we click on, where we go throughout the day and some of our financial transactions.

“All of this is being used by companies to create very precise profiles about who we are and thus understand our preferences and desires,” says Farahany in an interview with BBC News Brazil.

“It’s important for people to understand that they are already in a world where minds are read,” she says.

With the growing popularity of smartwatches – which collect data on heart rate, stress levels, sleep quality and much more – another frontier is beginning to be explored, that of our inner workings.

But the advance of neurotechnology and equipment that comes into direct contact with the head takes all this to a new level, one with more data and greater precision.

The professor explains that brain sensors are very similar to the heart rate sensors found in smartwatches or the rings that measure body temperature, except that they capture electrical activity in the brain.

“And every time you think, or every time you feel something, neurons activate in your brain, emitting small electrical discharges. Characteristic patterns can be used to draw conclusions,” she says.

“For example, if you see an advertisement and feel joy, stress, anger, boredom, engagement… all these reactions can be captured through the electrical activity of your brain and decoded with the most advanced artificial intelligence,” she adds.

That is, these brain signals transmit what we feel, observe, imagine or think.

Farahany says people need to understand and accept that their brains “are not entirely their own.”

This calls into question the concept of free will, that is, the power of an individual to choose their actions.

“Imagine that at the beginning of the week you resolve not to spend more than one hour a day on social networks. In the end you discover that you spent four hours a day. What happened?” reflects the professor.

“If there are algorithms designed to catch you when you want to disconnect, if you receive notifications when you spend too much time away from your cell phone, if you want to watch only one episode of the series and the next one starts automatically, could you really use your free will? They are tools and techniques designed to undermine what you have committed to.”

“Technology itself is rarely the problem”

Farahany, contrary to what one might think, is a great enthusiast of advances in neurotechnology.

Throughout her book, she gives a long list of contexts in which brain monitoring could improve humanity and save lives.

“What I propose is a balance. It is a way for people to see the positive aspects of technology, but also to protect themselves against the larger risks,” she states.

“To get there, we need to change the way we think about our relationship with technology. Technology is rarely the problem. It’s almost always its misuse.”

“It is not about adopting absolute positions like ‘all this is bad’ or ‘all this is great’, but about trying to define what the functionalities of this technology are for the common good and what the risks of its misuse are,” she adds.

The list is full of complex cases and double-edged swords.

Neurotechnology could reduce the number of fatal accidents by monitoring levels of inattention and, mainly, fatigue that affects truck drivers and train and subway drivers, for example.

This same functionality can be abused by a company or school in search of total productivity, in which moments of distraction by an employee or student are monitored, recorded and eventually punished.

A bracelet that captures electromagnetic waves sent by the brain to move arms and hands could transform these impulses into electronic signals and make digital or virtual reality experiences much more intuitive and integrated.

And there is even more potential in this device: detecting the early stages of a neurodegenerative disease. Analyzing brain activity as a whole could represent a breakthrough for medicine and longevity.

On the other hand, Farahany writes in the book, the same bracelet will also detect “if you are performing an intimate activity using your hands in your bedroom.”

And all this data in the hands of governments?

But for Farahany, the biggest concern regarding individual privacy is that governments possess an increasingly wide range of personal data.

She reports that the US Department of Defense funded a company that developed a biometric system that combines data from brain waves, cognitive states, facial recognition, pupil analysis and changes in the amount of sweat produced.

In China, a report published in 2018 in the South China Morning Post said that workers in various industries and members of the country’s military forces were already using brain wave monitors to detect emotional spikes such as depression, anxiety or anger.

In addition to its use to improve the performance and therefore the financial results of companies, the report states that the project also sought to “maintain Chinese social stability.”

Farahany says that in most countries, privacy laws do not explicitly address the right to mental privacy.

“I believe that the United Nations must move towards recognizing what I call the ‘right to cognitive freedom’. A universal right that would direct us toward an upgrade to privacy, one that explicitly says there is a right to mental privacy, a right to be protected from interference in the way we think and feel.”

She says that today “freedom of thought” is applied and understood to refer strictly to freedom of religion and belief.

“I think we need to expand this understanding to have protection against thought interference, manipulation and punishment.”

The problem is that technology always advances faster than the debate and approval of legislation, and companies and governments take advantage of legal loopholes.

“It’s really about trying to determine as early as possible and, also as the technology evolves, what the benefits and risks are. And then clarifying what’s at stake and developing a regulatory regime that addresses that. That’s not always easy to do,” Farahany acknowledges.

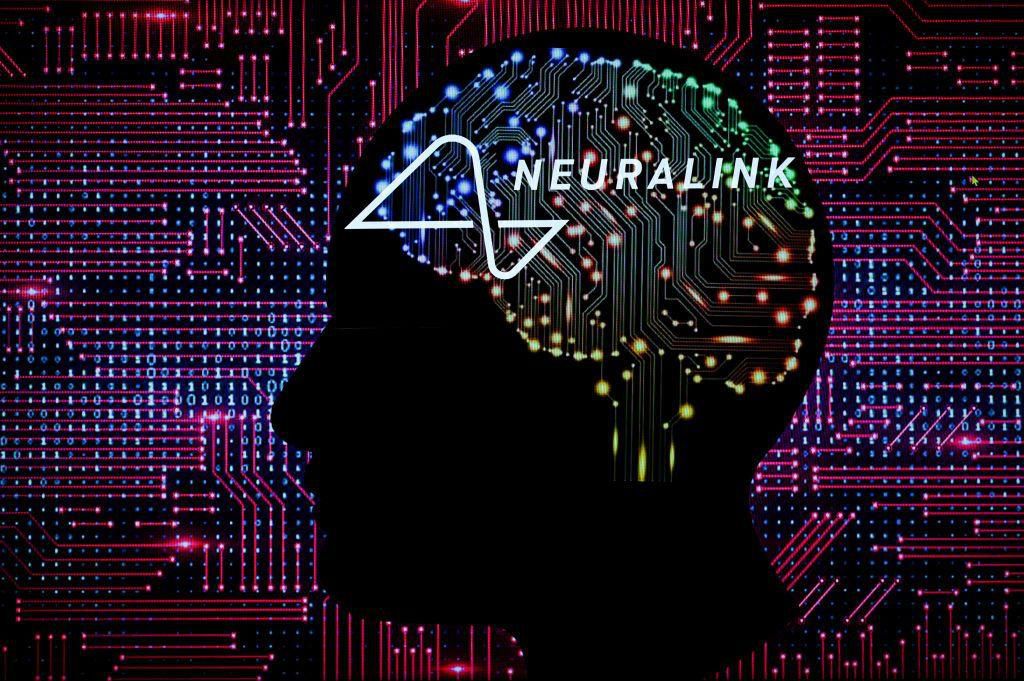

Elon Musk’s project

The best-known neurotechnology project has several controversial elements: it involves the implantation of a chip in the brain and is led by Elon Musk, a figure who frequently makes headlines, often due to controversies.

One of his companies, Neuralink, wants in the future to implant this type of device in the most complex human organ to cure diseases such as Alzheimer’s and to allow people with neurological diseases to control mobile phones or computers with their minds.

Some experts in the field express concern about the project, raising doubts about the implications of this type of technology developed by a for-profit company.

Last May, the FDA, the US agency that controls food and drugs, authorized the first human test.

“I’m not that worried about Musk’s project. In fact, I’m kind of optimistic about it,” Farahany says.

“Neuralink promises two innovations. The first is to do surgeries through robots, which would perform the most delicate and difficult parts of the operation [neurotechnology implant]. The second is the development of electrodes the size of a hair that could be implanted with much less risk to the human brain.”

Today, few surgeons in the world have the skills to perform a procedure like this.

“If I became severely disabled to the point where I could no longer communicate or move, I would probably seek the opportunity to have some type of neural technology implanted,” the expert concludes.

Source: Elcomercio

